Neuroscience-in-the-Wild: Memory & Sensor Fusion (NIH 10792324)

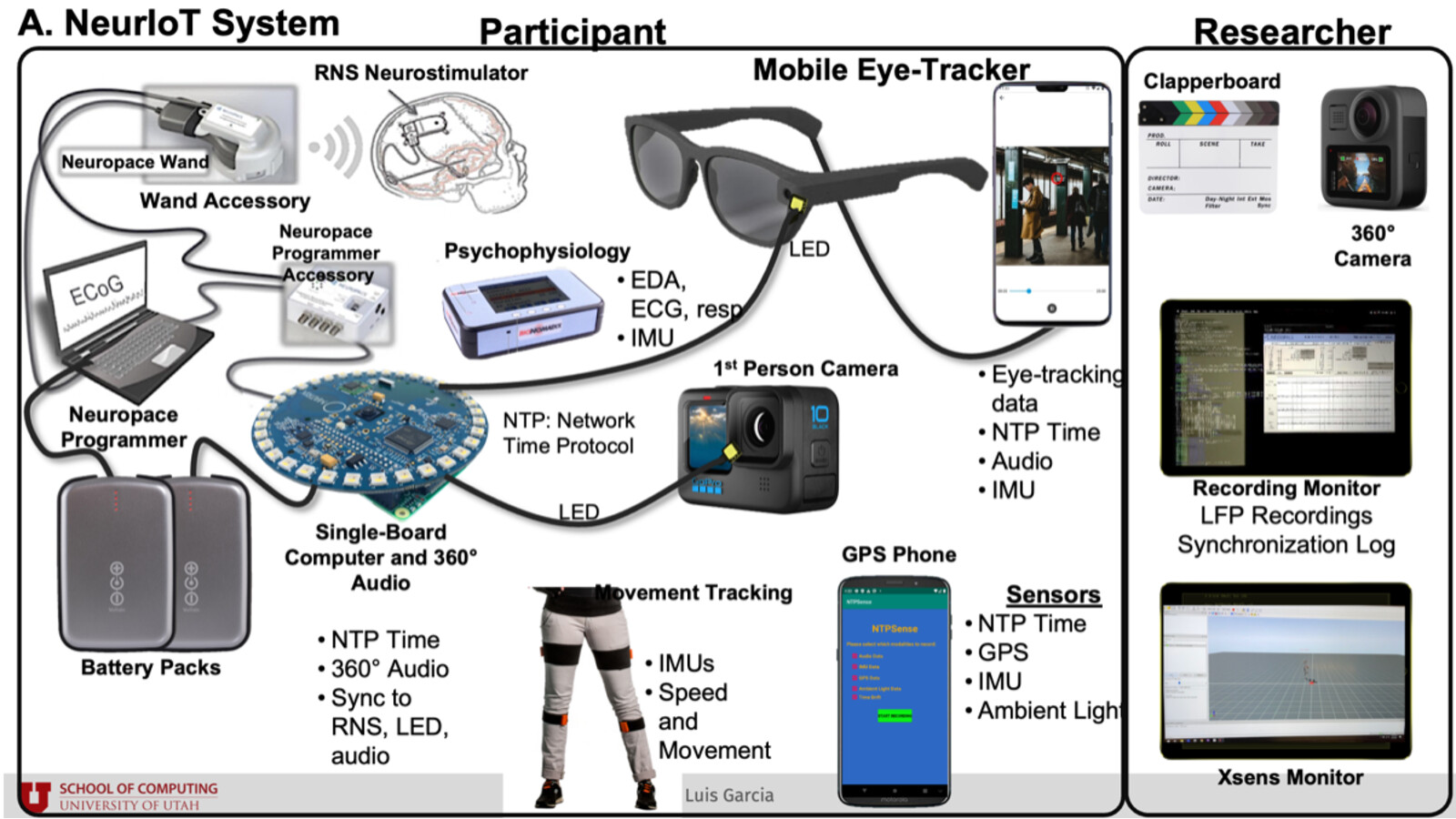

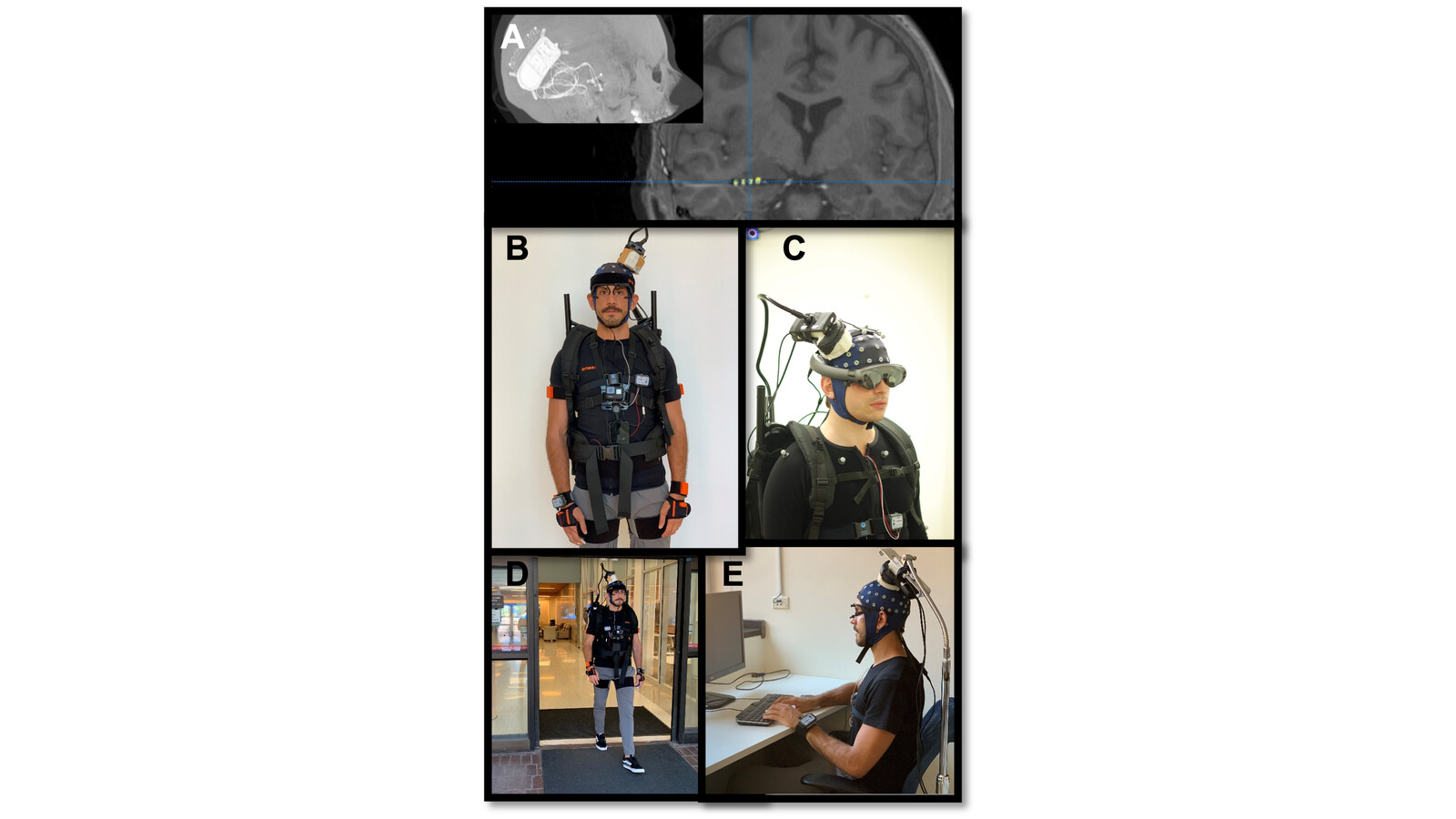

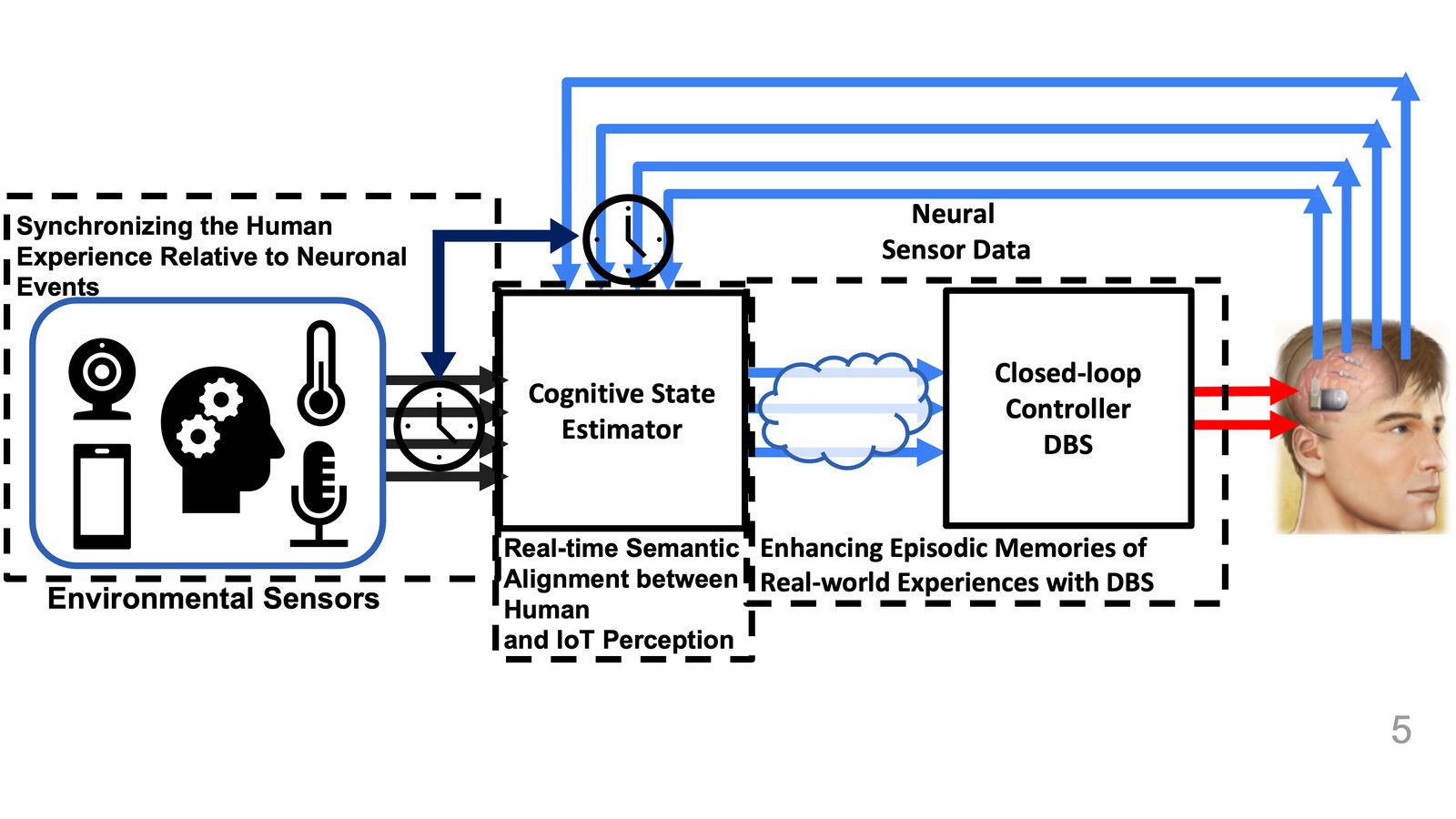

This project integrates smartphone-based experiential sensing, wearables, and intracranial neural recordings to study autobiographical memory formation during real-world behavior and to inform future memory-enhancing neuromodulation.

Project Overview

The NIH project combines continuous multimodal sensing (audio/video, GPS, IMU, eye-tracking, physiology, and self-reports) with precisely synchronized intracranial recordings from participants with chronically implanted therapeutic devices.

A core objective is to capture and analyze autobiographical memory encoding in natural settings rather than only in controlled laboratory tasks. The effort includes developing the CAPTURE mobile recording app and analysis pipelines for large multimodal data streams.

By linking real-world behavioral context to neural activity, the project establishes translational foundations for future closed-loop interventions aimed at improving memory outcomes in neurological disorders.

An active Trustworthy Mixed Reality thread evaluates the robustness of the visual-tracking and context-alignment pipelines that support reliable in-the-wild cognitive experimentation.

Key Capabilities

- Synchronized collection of neural, wearable, and environmental data streams during unconstrained real-world behavior

- Multimodal alignment methods for time-locked analysis across intracranial signals and sensed context

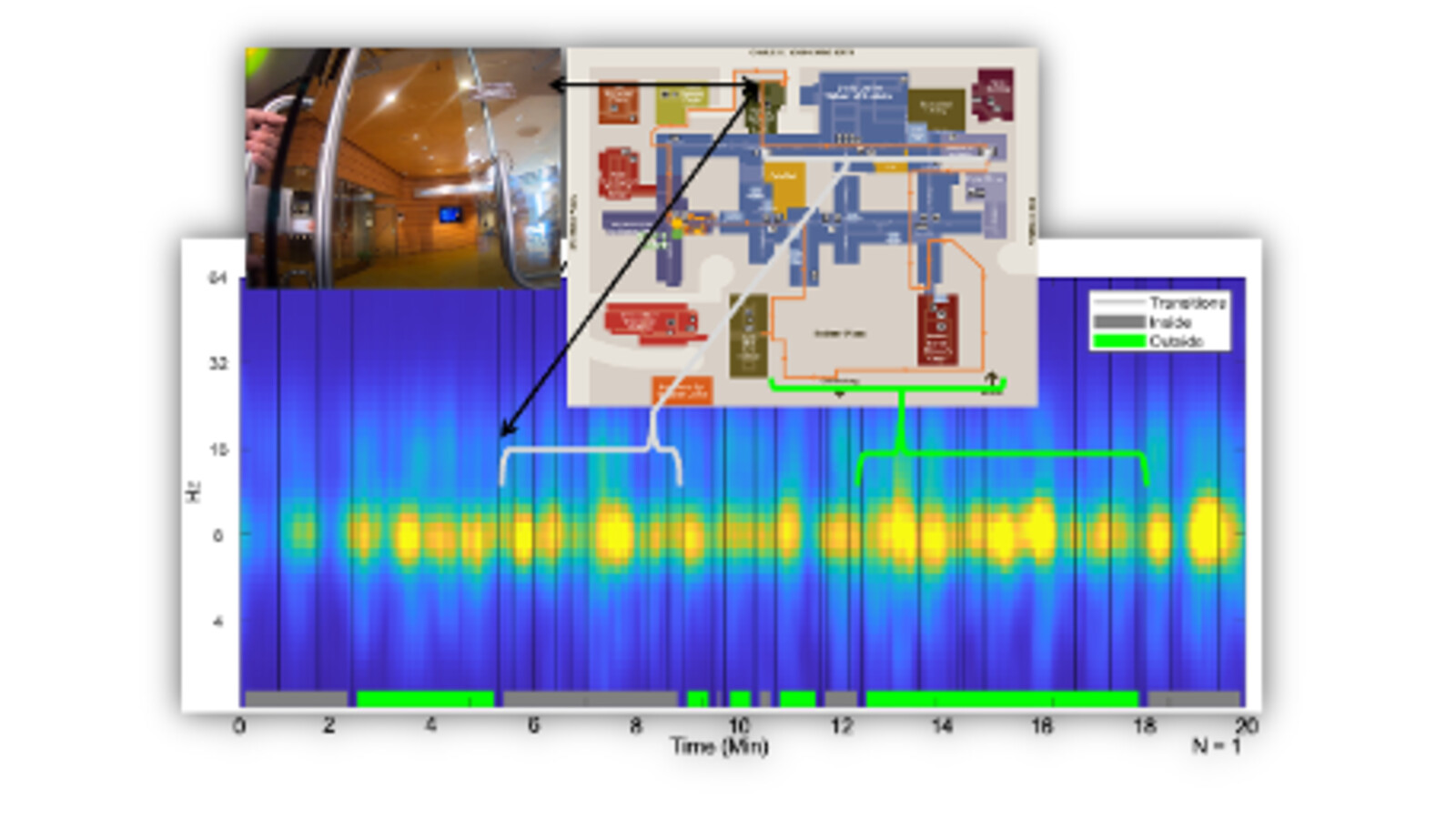

- Sensor-fusion and model-building workflows for context-shift and event-boundary detection

- End-to-end mobile tooling for in-the-wild experimental capture at scale

- Translational analysis pipeline connecting neural markers to memory-relevant behavior

- Robustness analysis for XR tracking pipelines used in real-world cognitive sensing workflows
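To make the idea of time-locked alignment concrete, here is a minimal sketch in Python. It is not the project's pipeline. It assumes every stream has already been mapped onto one shared clock with integer millisecond timestamps sorted in ascending order, and the function names `align_nearest` and `epoch` are hypothetical, chosen only for illustration.

```python
from bisect import bisect_left, bisect_right


def align_nearest(event_times, stream_times, tolerance):
    """For each event time, return the index of the nearest sample in
    stream_times, or None if no sample lies within `tolerance`.

    Assumption: both lists are sorted ascending and share one clock.
    """
    matches = []
    for t in event_times:
        i = bisect_left(stream_times, t)
        # Only the samples just before and just after t can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(stream_times)]
        best = min(candidates, key=lambda j: abs(stream_times[j] - t), default=None)
        if best is not None and abs(stream_times[best] - t) <= tolerance:
            matches.append(best)
        else:
            matches.append(None)
    return matches


def epoch(signal_times, signal_values, event_time, pre, post):
    """Cut a window [event_time - pre, event_time + post] out of a signal.

    Returns (time relative to the event, value) pairs, which is the usual
    starting point for time-locked analysis.
    """
    lo = bisect_left(signal_times, event_time - pre)
    hi = bisect_right(signal_times, event_time + post)
    return [(signal_times[k] - event_time, signal_values[k]) for k in range(lo, hi)]


# Illustrative data only: a 10 Hz "neural" stream and three sensed events.
neural_ms = [0, 100, 200, 300, 400]
neural_values = [10, 11, 12, 13, 14]
event_ms = [20, 310, 1500]

matched = align_nearest(event_ms, neural_ms, tolerance=50)
window = epoch(neural_ms, neural_values, event_time=200, pre=100, post=100)
```

The tolerance check matters in practice: an event with no neural sample nearby (the third one here) is reported as unmatched instead of being silently paired with a distant sample.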

Example Use Cases

- Studying autobiographical memory encoding and retrieval in daily-life environments

- Detecting context shifts from multimodal sensory and neural observations

- Characterizing neural signatures that can support future adaptive stimulation policies

- Evaluating robustness of real-world brain-and-sensor data fusion workflows

- Assessing trustworthy mixed reality sensing reliability for longitudinal cognitive experiments
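As a rough illustration of context-shift detection, the sketch below flags a shift whenever consecutive time windows have dissimilar feature vectors. This is a deliberately simple stand-in and not the method from the publications listed below. It assumes each window has already been summarized as a fixed-length embedding, and the names `cosine_distance` and `detect_shifts`, the example vectors, and the threshold are all hypothetical.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # Treat an all-zero vector as maximally dissimilar (a design choice).
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def detect_shifts(embeddings, threshold):
    """Return indices i where window i differs from window i - 1
    by more than `threshold` in cosine distance."""
    return [
        i
        for i in range(1, len(embeddings))
        if cosine_distance(embeddings[i - 1], embeddings[i]) > threshold
    ]


# Illustrative data only: two windows in one context, then two in another.
windows = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
shifts = detect_shifts(windows, threshold=0.5)
```

A fixed threshold is the simplest possible decision rule. A real workflow would likely need smoothing over time and a threshold tuned against labeled event boundaries.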

Project Figures

Selected Publications

Detecting Context Shifts in the Human Experience Using Multimodal Foundation Models

Iris Nguyen, Liying Han, Burke Dambly, Alireza Kazemi, Marina Kogan, Cory Inman, Mani Srivastava, Luis Garcia · Proceedings of the 23rd ACM Conference on Embedded Networked Sensor Systems (2025)

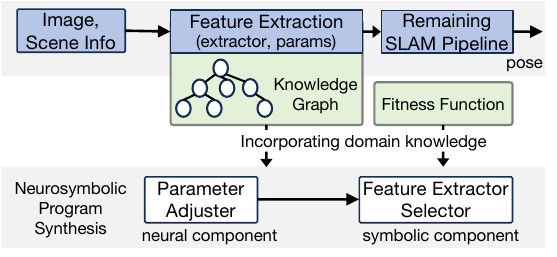

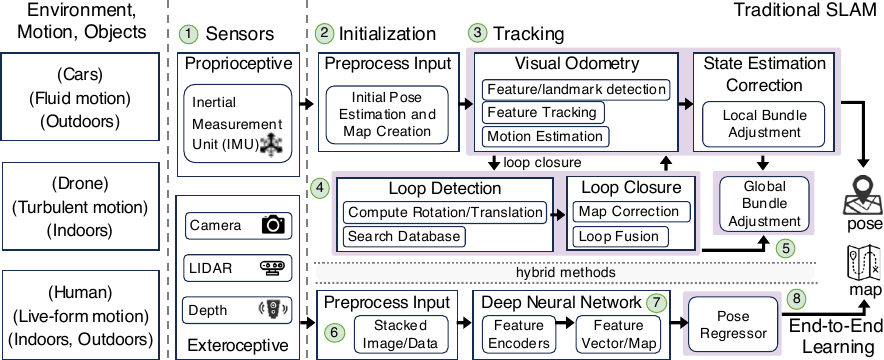

A Neurosymbolic Approach to Adaptive Feature Extraction in SLAM

Yasra Chandio, Momin A Khan, Khotso Selialia, Luis Garcia, Joseph DeGol, Fatima M Anwar · 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) (2024)

Yasra Chandio, Khotso Selialia, Joseph DeGol, Luis Garcia, Fatima M Anwar · ACM Transactions on Sensor Networks (TOSN) (2025)